Use this cross-entropy loss for binary (0 or 1) classification applications. It computes the cross-entropy loss between true labels and predicted labels. For CrossEntropyLossLayer, the input and target should be scalar values between 0 and 1, or arrays of these. One example configuration uses full neighborhood sampling, ReLU activation, a dropout of 0.2, CrossEntropyLoss as the loss function, and the Adam optimizer; the values 0.001, 256, and 128 are also listed for it.
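To make the definition concrete, here is a minimal sketch of binary cross-entropy in plain Python. The function name `binary_cross_entropy` and the `eps` clamp are assumptions made for this illustration; they are not taken from CrossEntropyLossLayer or any particular library.

```python
import math


def binary_cross_entropy(targets, predictions, eps=1e-12):
    """Mean binary cross-entropy over paired targets and predicted probabilities."""
    if len(targets) != len(predictions):
        raise ValueError("targets and predictions must have the same length")
    total = 0.0
    for y, p in zip(targets, predictions):
        # Clamp the probability to avoid log(0); eps is an assumption of this sketch.
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1.0 - y) * math.log(1.0 - p))
    # Return the mean of the per-element losses.
    return total / len(targets)


# Predictions close to the labels give a small loss, distant ones a large loss.
confident = binary_cross_entropy([1, 0], [0.9, 0.1])
wrong = binary_cross_entropy([1, 0], [0.1, 0.9])
```

Both targets and predictions are values between 0 and 1, matching the input requirement stated above.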

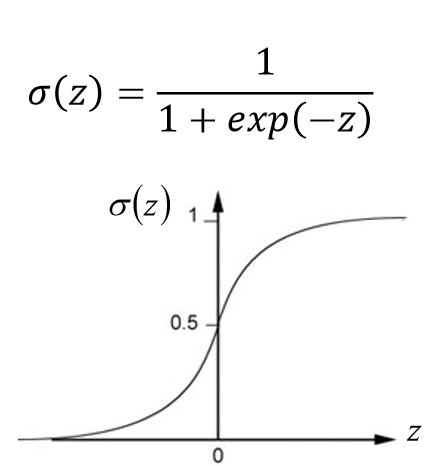

When operating on multidimensional inputs, CrossEntropyLossLayer effectively threads over any extra array dimensions to produce an array of losses and returns the mean of these losses. CrossEntropyLossLayer exposes ports for use in NetGraph etc., with a port type given as a real array of rank n or an integer array of rank n-1.

Cross-entropy loss, or log loss, measures the performance of a classification model whose output is a probability value between 0 and 1. Cross-entropy loss increases as the predicted probability diverges from the actual label. The loss often referred to as `categorical_crossentropy` reduces, for binary labels, to:

$$J(w) = -\frac{1}{N}\sum_{i=1}^{N}\left[\, y_i \log(\hat{y}_i) + (1 - y_i)\log(1 - \hat{y}_i) \,\right]$$

where $w$ refers to the model parameters, $y_i$ is the true label of example $i$, and $\hat{y}_i$ is the predicted probability for that example.

The only difference between the original Cross-Entropy Loss and Focal Loss is a pair of hyperparameters: alpha ($\alpha$) and gamma ($\gamma$). An important point to note is that when $\gamma = 0$, Focal Loss becomes Cross-Entropy Loss.

To perform a logistic regression in PyTorch you need three things: (1) labels (targets) encoded as 0 or 1; (2) a sigmoid activation on the last layer, so the number of outputs will be 1; and (3) binary cross-entropy as the loss function. In the training loop, put the model in train mode and enable gradient calculation (`model.train()`, `torch.set_grad_enabled(True)`), then compute the loss for each batch inside `for batch_idx, batch in enumerate(train_dataloader):`.

The Piecewise Cross Entropy loss not only improves performance in FGIR and FGVC without extra computation at the testing stage, but is also simple to implement. Compared with previous work in FGIR, its authors report the best performance on CARS196 and CUB-200-2011.
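The relationship between Focal Loss and Cross-Entropy Loss can be checked numerically. The sketch below assumes the commonly used binary form $-\alpha_t (1 - p_t)^{\gamma} \log(p_t)$; the function names, the optional `alpha` argument, and the `eps` clamp are illustrative choices, not an API from any specific library.

```python
import math


def cross_entropy(y, p, eps=1e-12):
    """Binary cross-entropy for a single label y (0 or 1) and probability p."""
    p = min(max(p, eps), 1.0 - eps)
    # p_t is the probability the model assigns to the true class.
    p_t = p if y == 1 else 1.0 - p
    return -math.log(p_t)


def focal_loss(y, p, gamma=2.0, alpha=None, eps=1e-12):
    """Binary focal loss; alpha=None disables class weighting (an assumption of this sketch)."""
    p = min(max(p, eps), 1.0 - eps)
    p_t = p if y == 1 else 1.0 - p
    if alpha is None:
        weight = 1.0
    else:
        weight = alpha if y == 1 else 1.0 - alpha
    # The (1 - p_t) ** gamma factor down-weights examples that are already well classified.
    return -weight * (1.0 - p_t) ** gamma * math.log(p_t)


# With gamma = 0 and no alpha weighting, the modulating factor is 1.
same = focal_loss(1, 0.7, gamma=0.0)
reference = cross_entropy(1, 0.7)

# With gamma > 0, an easy example (p_t = 0.9) contributes less than under cross-entropy.
easy_focal = focal_loss(1, 0.9, gamma=2.0)
easy_ce = cross_entropy(1, 0.9)
```

Note that if `alpha` is set, the case $\gamma = 0$ gives an alpha-weighted cross-entropy instead of the plain one.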
